Online Audio Spaces Update: New Features for Virtual Event Organizers

It’s been about 8 weeks since we launched High Fidelity’s new audio spaces in beta. We really appreciate all the support, particularly if you have ...

VR is about doing, not just seeing. New standalone devices coming this summer will offer 18 degrees of freedom (18DoF) and are likely to change the growth rate of the industry.

When we use our computers, we are very often trying to reach “inside” them to make something—like editing a document, creating a drawing or diagram, or building in Minecraft. And when we try to reach into the computer, our hands are blocked by the screen, the keyboard, and the mouse.

The mouse was a revolution. Before it, we had only buttons with which to slowly convey our intentions to the machine. Our human minds are analog: we think in continuous curves, like a pen held in the hand. We think the way we move, along ballistic curves through spacetime. And in these curves, there is the idea of degrees of freedom: the number of independent ways an object can move through a 3D space.

One degree of freedom is a slider, up and down. Still immensely better than the keyboard. Our very first computer Pong games, with their potentiometer knobs, were delightful: we could finally MOVE, albeit only along a single line.

Photo credit: ATARI

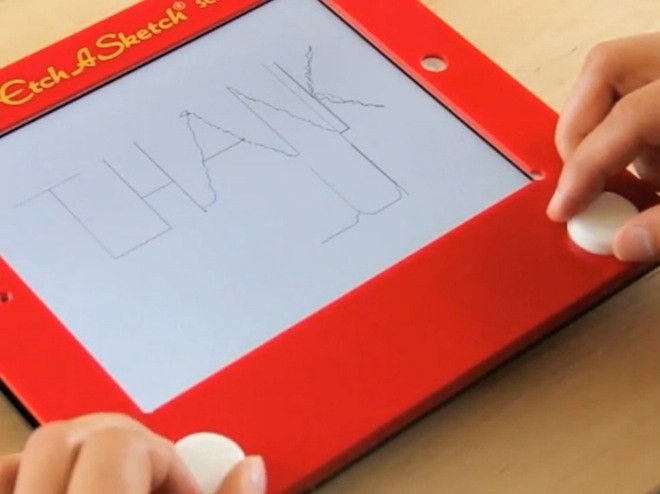

Next was the mouse, its two degrees of freedom enough to roughly sketch out a circle. The core offering of the Macintosh computer was nothing more (or less) than this new freedom provided by two degrees: up/down, plus left/right. We could see a window across the screen and deftly grab and slide it over another. Paradise. Think of how hard it is to use the Etch-A-Sketch as a drawing tool... a measure of how bad we are at adapting to the wrong interface:

Photo credit: Etch-A-Sketch

But the richness of human existence — that takes even more than a mouse. And as we began trying to model things in 3D, the limits of that mouse became glaring. Two degrees of freedom is like a slice through the 3D universe — a single plane along which all movement must occur. And so 3D sculptors had to learn a complex language of chords — fingers on keys orienting the plane, which the mouse then moved within.
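To make the "slice" idea concrete, here is a minimal sketch in Python. The plane names and the `move_in_plane` helper are hypothetical, invented only for illustration and not taken from any real 3D tool. It shows how a two-degree mouse movement can only slide a point within whichever plane a key chord has selected:

```python
# Hypothetical "chords": each one picks the plane the mouse moves within,
# expressed as the two 3D axes that mouse X and mouse Y are mapped onto.
PLANES = {
    "xy": ((1.0, 0.0, 0.0), (0.0, 1.0, 0.0)),
    "xz": ((1.0, 0.0, 0.0), (0.0, 0.0, 1.0)),
    "yz": ((0.0, 1.0, 0.0), (0.0, 0.0, 1.0)),
}


def move_in_plane(position, mouse_dx, mouse_dy, plane):
    """Apply a 2-DoF mouse movement to a 3D point, constrained to one plane."""
    u, v = PLANES[plane]
    return tuple(
        p + mouse_dx * a + mouse_dy * b
        for p, a, b in zip(position, u, v)
    )


point = (0.0, 0.0, 0.0)
point = move_in_plane(point, 2.0, 1.0, "xy")  # z cannot change in this plane
point = move_in_plane(point, 0.0, 3.0, "xz")  # switch planes to reach depth
print(point)
```

Reaching a single point in space takes two separate movements and a plane switch in between, which is the "complex language" the sculptor has to learn.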

Engineers tried to break free of those two degrees—extend them to 2.x—with gadgets like Space Navigators and Wacom tablets and even the latest iPhone’s pressure sensitivity. But two degrees plus some fractions is still not natural for us.

And now consider the simple motion of your hand, in lifting a coffee cup from the table to drink: How many degrees of freedom is that? Well, imagine the very center of the cup, lifting up and a bit left, and toward you as it rises to your mouth — three degrees of freedom, in X/Y/Z. But that isn’t all — it must also turn on its axis so that the handle pivots to be at your cheek, and then tilt to pour the coffee into your mouth. That rotation is another three degrees of freedom, separate from position. So that is six, and all with just one hand!

But the other hand might be moving and holding something as well, and the moving head, ever shifting the camera of the eyes to better gauge distances, is another six in the same way. All together that is 6 + 6 + 6 = 18 degrees of freedom, nine times as many as the once-transformative mouse!
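The counting above can be sketched in a few lines of Python. The `Pose` class and its field names are assumptions made for illustration, not part of any VR SDK. Each tracked body part carries three position values and three rotation values:

```python
from dataclasses import dataclass, fields


@dataclass
class Pose:
    """One tracked rigid body: three position and three rotation values."""
    x: float = 0.0
    y: float = 0.0
    z: float = 0.0
    pitch: float = 0.0
    yaw: float = 0.0
    roll: float = 0.0


def degrees_of_freedom(tracked):
    """Count every independent value across all tracked poses."""
    return sum(len(fields(pose)) for pose in tracked.values())


mouse_dof = 2  # up/down plus left/right
body = {"head": Pose(), "left_hand": Pose(), "right_hand": Pose()}

total = degrees_of_freedom(body)
ratio = total // mouse_dof
print(total, ratio)
```

With a head and two hands tracked, the total comes to 18, and dividing by the mouse's two degrees gives the factor of nine.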

If you can deliver 18 degrees of freedom, there is suddenly no learning necessary. Picking up an apple is just like… picking up an apple. Until now, there has never been a way to use a computer to create something that did not require a substantial learning curve. The cost of learning a new or complex skill is something we (and investors) often forget: making something easy is a lot better than making it better.

For example, there are numerous remotely operated robotic devices out there that you mostly don’t know about (except, sadly, military drones), including many robotic surgery devices. But these devices are all controlled by operators who have to learn to use them with fewer than 18 degrees of freedom (18DoF), and they therefore require huge amounts of training. Think how different it would be if you put on a VR headset, looked through the eyes of a humanoid robot, looked down at your hands, and found them there. These 18DoF interfaces (head and hands) will let people with no new training teleport themselves into robots far away, for things like teaching or rescue operations.

And if 18DoF isn’t enough for you (and for many people it isn’t—consider playing a piano, with each finger moving independently), you are in luck. The next generation of VR devices will optically track your fingers, too, allowing you to put down the controllers and talk with your hands.

VR is still waiting for its iPhone moment, and there are numerous reasons why it hasn’t happened. But the simplest explanation is that the first 18DoF devices (the Rift and Vive and Windows Mixed Reality) require a gaming PC along with substantial setup and installation to use. They are research devices for developers, not consumer VR. There are standalone devices (most notably the Oculus Go) that are easy to use and at a consumer price point, but these devices are not 18DoF—the Oculus Go is 5DoF (three for the head, two for the handheld controller).

But the Oculus Quest, almost certainly launching before summertime at a $400 price, has 18DoF in its wireless hand controllers and wireless headset. And the HTC Vive Focus also has 18DoF, launching very soon as well. These devices will be the first to offer the full control of motion needed to achieve a true VR experience. When 18DoF becomes standard in all VR hardware, we’ll reach a breakthrough in VR usability. Then we will start applying virtual reality to the most human experiences out there.


by Ashleigh Harris

Chief Marketing Officer
