Online Audio Spaces Update: New Features for Virtual Event Organizers

It’s been about 8 weeks since we launched High Fidelity’s new audio spaces in beta. We really appreciate all the support, particularly if you have ...

Strangely enough, we added exactly 100 people to last month’s record.

This is the third post in a series recapping the monthly load tests High Fidelity runs to push the limits of our platform as we build technology that can reach Internet scale and someday support a billion users. It’s early days.

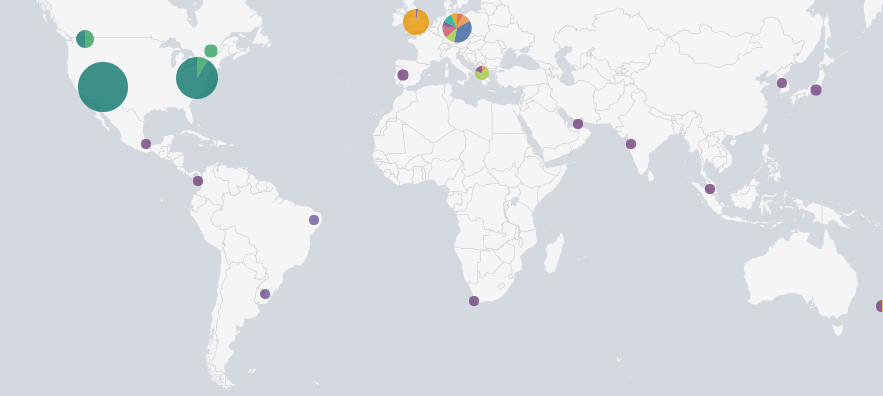

In the most recent load test, we trialed a new live trivia game (in beta), I got to do more crowdsurfing, and we definitely found a few bugs. But the latest development servers were able to deliver solid audio and avatar data to 356 people from 43 different countries, for a total of more than 2 gigabits per second of network traffic.
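For a rough sense of scale, assume the traffic were spread evenly across participants. The post gives only the aggregate, so this is an illustration and not a measured per-user figure. The average per person then works out to:

```latex
\frac{2 \times 10^{9}\ \text{bit/s}}{356\ \text{people}} \approx 5.6 \times 10^{6}\ \text{bit/s} \approx 5.6\ \text{Mbit/s per person}
```

Because the total was *more than* 2 Gbit/s, this figure is a lower bound on the average.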

Close your eyes and imagine you are standing at the center of Grand Central Station in New York. Can you hear it? High-heeled shoes reverberate off the distant marble walls. You can make out maybe 10 different conversations near you, all coming from different directions. A kid runs by. A coffee machine froths milk. Underneath it all is the murmur of many voices you can’t quite make out. Now open your eyes: everything is in motion. A big group is coming up out of a subway tunnel together. Two people stand gesturing as they talk at the railing of the mezzanine. Waves of commuters cross in front of you on their way to work. Hundreds of people.

Online games and social media routinely brag about concurrent usage in the hundreds of thousands or millions, and for good reason: people crave shared experiences. But in reality, only a few people are usually together in one space and able to communicate live, whether that space is a Discord group, a Fortnite match, or an MMOG server.

Computationally, letting everyone in a space experience everyone else in real time gets quadratically harder with each new person who enters.

Ten people together means that 100 computations are needed to support them, but 100 people means 10,000! With audio, for example, each listener needs to receive a separate stream for each ear, mixed only from the sources close enough for them to hear. At Grand Central Station you can hear hundreds of people at once, which means hundreds of thousands of computations. Now you can hopefully see how these load tests break new ground.
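To make the quadratic growth concrete, here is a minimal Python sketch of per-listener stereo mixing. It is not High Fidelity’s audio mixer. The following are all illustrative assumptions: 2D positions, one mono sample per person, a 20-unit audible radius, inverse-distance falloff, a constant-power pan with every listener facing +y, and the names `Person` and `mix_all`. The sketch shows that every listener must examine every other source, so the work grows as n(n − 1), or roughly n².

```python
import math
from dataclasses import dataclass


@dataclass
class Person:
    x: float
    y: float
    sample: float  # this person's current mono audio sample (simplification)


def mix_all(people, audible_radius=20.0):
    """Build a (left, right) mix for every listener from nearby sources only.

    Returns the mixes and the number of listener/source pairs examined,
    which grows as n * (n - 1), i.e. roughly n squared.
    """
    mixes = []
    pairs_checked = 0
    for i, listener in enumerate(people):
        left = right = 0.0
        for j, source in enumerate(people):
            if i == j:
                continue  # nobody hears their own voice in their mix
            pairs_checked += 1
            dx, dy = source.x - listener.x, source.y - listener.y
            d = math.hypot(dx, dy)
            if d > audible_radius:
                continue  # too far away to hear
            gain = 1.0 / max(d, 1.0)  # simple inverse-distance falloff
            # Constant-power pan, assuming listeners face +y so +x is "right".
            pan = dx / d if d > 0 else 0.0        # -1 (left) .. +1 (right)
            theta = (pan + 1.0) * math.pi / 4.0   # 0 .. pi/2
            left += source.sample * gain * math.cos(theta)
            right += source.sample * gain * math.sin(theta)
        mixes.append((left, right))
    return mixes, pairs_checked


for n in (10, 100):
    _, work = mix_all([Person(float(k), 0.0, 0.0) for k in range(n)])
    print(n, work)  # 10 -> 90, 100 -> 9900
```

The distance cutoff reduces how much mixing each listener does, but in this naive form it does not reduce the n² pair checks themselves. That is why a crowd the size of Grand Central’s is hard.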

Similarly, avatar body and joint movements need to be represented realistically so that, for example, you can see people “do the wave.” That only works if we all move in sync. But a crowd is far too much data to send to everyone, so we have to compute only the information you need to see: more data for the people closest to you, less for those farther away or out of view.
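The idea of sending “more data for the people closest to you” can be sketched as a per-viewer level-of-detail plan. This is a hypothetical illustration, not High Fidelity’s avatar mixer. The three tiers, the 120° field of view, the 10-unit “near” distance, the budget of two full-detail avatars, and the names `Avatar` and `plan_updates` are all assumptions.

```python
import math
from dataclasses import dataclass

FULL, REDUCED, POSITION_ONLY = "full", "reduced", "position_only"


@dataclass
class Avatar:
    name: str
    x: float
    y: float


def in_view(viewer, facing, other, half_fov):
    """True if `other` is within +/- half_fov radians of the viewer's facing angle."""
    angle = math.atan2(other.y - viewer.y, other.x - viewer.x)
    diff = (angle - facing + math.pi) % (2 * math.pi) - math.pi  # wrap to [-pi, pi)
    return abs(diff) <= half_fov


def plan_updates(viewer, facing, others, full_budget=2, near=10.0,
                 half_fov=math.radians(60)):
    """Assign a (hypothetical) level of detail to each other avatar, nearest first."""
    def dist(a):
        return math.hypot(a.x - viewer.x, a.y - viewer.y)

    plan = {}
    full_used = 0
    for a in sorted(others, key=dist):
        visible = in_view(viewer, facing, a, half_fov)
        if visible and dist(a) <= near and full_used < full_budget:
            plan[a.name] = FULL            # all joints, every update
            full_used += 1
        elif visible:
            plan[a.name] = REDUCED         # fewer joints or a lower update rate
        else:
            plan[a.name] = POSITION_ONLY   # just enough to place them in the world
    return plan


me = Avatar("me", 0.0, 0.0)
others = [Avatar("A", 2, 0), Avatar("B", 5, 0), Avatar("C", 8, 0),
          Avatar("D", -3, 0), Avatar("E", 50, 0)]
print(plan_updates(me, 0.0, others))
```

Here `A` and `B` get full detail, `C` falls back to reduced detail once the budget is spent, `E` is visible but too far away for full detail, and `D` (behind the viewer) gets position only. Capping full-detail avatars with a budget keeps each viewer’s bandwidth bounded no matter how large the crowd grows.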

This is the record we are shooting for every month. To our knowledge, no one has gathered this many people together in this way. If anyone knows of a game or platform that has actually demonstrated this sort of scale, please do let me know. Some projects are targeting this kind of capability, but I haven’t seen any of them actually working.

If you were there, you saw three big bugs that we have since fixed in the lab for the next test. First, many people saw only some of the avatars, with the rest represented as purple balls. Second, for part of the event, the audio servers reset every minute or so, causing a few seconds of silence while they restarted. Third, when we all shouted at the same time, the audio from the servers broke up for a bit. Also, avatar body movements weren’t smooth enough; the ones near the center of your view will be much smoother at the next load test event.

When I jumped off-stage to wander around and make friends with everyone, I met a lot of interesting new people from far away, which is the kind of thing that really keeps you getting up early to work on this stuff. For example, I met a group of friends from Russia, Belarus, and Ukraine who had jumped in together for the first time, one of whom had full-body tracking in VR. I also met people from Japan, Finland, and New Zealand.

We know people want “more things to do” in VR, so to further stress the system we’re using the load test events to try out some interactive content. Our team has been working on a live trivia game. Here’s how it works: a group of avatars crowds onto a giant game board. After each question is read, every avatar has to move onto the floor tile representing the correct answer. Anyone not on the right tile is teleported off the board. This revealed some bugs related to scripting load and level of detail, but it was still good fun and suggests that live, sudden-death elimination experiences are something we’ll see more of in VR.
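The elimination rule described above fits in a few lines. This Python sketch is only an illustration of the game logic and not the actual in-world script. The `play_game` name, tile IDs as strings, and the `choose` callback standing in for an avatar walking onto a tile are all assumptions.

```python
from typing import Callable


def play_game(players: list[str],
              questions: list[tuple[str, str]],
              choose: Callable[[str, str], str]) -> set[str]:
    """Run sudden-death trivia rounds (hypothetical sketch).

    questions: (question text, id of the tile holding the correct answer).
    choose(player, question): the tile that player's avatar moves onto.
    Stops when at most one player is left or the questions run out.
    """
    remaining = set(players)
    for question, correct_tile in questions:
        if len(remaining) <= 1:
            break
        positions = {p: choose(p, question) for p in remaining}
        # Anyone not standing on the correct tile is teleported off the board.
        remaining = {p for p, tile in positions.items() if tile == correct_tile}
    return remaining


answers = {
    "ann": {"q1": "A", "q2": "B"},
    "bob": {"q1": "A", "q2": "C"},
    "cat": {"q1": "D"},
}
winners = play_game(["ann", "bob", "cat"],
                    [("q1", "A"), ("q2", "B"), ("q3", "C")],
                    lambda p, q: answers[p].get(q, "none"))
print(winners)  # {'ann'}
```

A real game would need a rule for the round where everyone misses a question. This sketch simply returns an empty set in that case.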

We’re calling this live trivia game “Last Avatar Standing.” Check out our Events page on the website for times, or look for it to be featured in the GoTo menu.

We estimate that a single server running on the largest machine available from a cloud provider will top out at around 500 people. We hope to demonstrate that at next month’s test on October 6, at 11am PDT. But, as others have also pointed out, the more important ‘metaverse’ goal is to decentralize the servers so that many machines can together simulate spaces of unlimited size with any number of people in them. That is exactly the architecture we are building for High Fidelity, and we will start deploying and testing it once we have optimized single-server performance.

More specifically, the design of High Fidelity allows domains (virtual worlds or environments) to be of any size, with their internal regions partitioned among many different servers, each with the capacity we have been load testing. As you move around those domains, you will be handed off from server to server, and those servers can be nested into a tree so that servers at higher layers approximate distant sounds, people, or objects. Servers can also register to provide service for domains. If you saw the servers restarting at our event, you were actually seeing that technology in action, with new servers being “hot-swapped” in for the old ones. This will let large groups of people contribute their machines to create enormous spaces for large communities, and our blockchain-based currency will let people pay each other for sharing their machines this way if they wish.
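The partitioning, handoff, and layering described above can be illustrated with a toy quadtree of servers. This is a hedged sketch under stated assumptions, not High Fidelity’s architecture. The square regions, the four-way split, the names `Region`, `ServerNode`, `leaf_for`, and `move`, and the use of a simple headcount as the “approximation” a higher-layer server offers are all hypothetical. Server registration and hot-swapping are not modeled.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Region:
    """Axis-aligned rectangle covering [x0, x1) x [y0, y1)."""
    x0: float
    y0: float
    x1: float
    y1: float

    def contains(self, x: float, y: float) -> bool:
        return self.x0 <= x < self.x1 and self.y0 <= y < self.y1


@dataclass
class ServerNode:
    name: str
    region: Region
    children: list["ServerNode"] = field(default_factory=list)
    people: set[str] = field(default_factory=set)  # used only at leaf servers

    def split(self) -> None:
        """Hand this region's four quadrants to four child servers (split before anyone joins)."""
        r = self.region
        mx, my = (r.x0 + r.x1) / 2, (r.y0 + r.y1) / 2
        quads = [Region(r.x0, r.y0, mx, my), Region(mx, r.y0, r.x1, my),
                 Region(r.x0, my, mx, r.y1), Region(mx, my, r.x1, r.y1)]
        self.children = [ServerNode(f"{self.name}.{i}", q) for i, q in enumerate(quads)]

    def leaf_for(self, x: float, y: float) -> Optional["ServerNode"]:
        """Find the leaf server responsible for point (x, y), or None if outside."""
        if not self.region.contains(x, y):
            return None
        for child in self.children:
            found = child.leaf_for(x, y)
            if found is not None:
                return found
        return self

    def population(self) -> int:
        """Coarse summary a higher-layer server can offer about a distant region."""
        if not self.children:
            return len(self.people)
        return sum(c.population() for c in self.children)


def move(root: ServerNode, person: str, old: tuple[float, float],
         new: tuple[float, float]) -> tuple[str, str]:
    """Move a person; differing server names mean a server-to-server handoff."""
    src, dst = root.leaf_for(*old), root.leaf_for(*new)
    src.people.discard(person)
    dst.people.add(person)
    return src.name, dst.name


root = ServerNode("root", Region(0, 0, 100, 100))
root.split()              # four servers, one per quadrant
root.children[0].split()  # a busy corner gets four more
root.leaf_for(10, 10).people.add("ann")
print(move(root, "ann", (10, 10), (75, 75)))  # ('root.0.0', 'root.3')
print(root.population())                       # 1
```

In this sketch, a listener far from a region would consult that region’s parent node for a cheap summary instead of talking to every leaf beneath it. That is the kind of approximation the tree of servers is meant to enable.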

Our next load test is scheduled for Saturday, October 6, at 11:00am PDT, to hopefully make it easier for more users to participate and draw a larger crowd. I warmly invite you to join us. It’s a chance to be part of a historic change in human communication, and an experience which is like no other.

Related Article:

by Ashleigh Harris

Chief Marketing Officer
